Nate Silver is a math pundit who founded the FiveThirtyEight blog, now hosted by the NY Times. The blog focuses on presidential elections, and there he used a series of polls to predict (very successfully) the results of both the 2008 and 2012 US elections. An integral part of Nate’s approach is to use Bayesian probability thinking to keep revising his estimates as data comes in, regardless of whether that data is from baseball, a poker game, the US elections or climate change.

Silver’s book, The Signal and the Noise: The Art and Science of Prediction, should be required reading for anyone who needs to review increasingly large tranches of data. Chapter 12 of his book, called “A Climate of Healthy Skepticism”, is devoted to the climate change numbers. Part of Silver’s thesis is that many of us can’t separate the signal from the noise.

We have known about the greenhouse effect for a very long time. As he notes, politics and other factors have undermined the search for scientific truth in this debate. Back in 1990 the IPCC made two main findings:

- “There is a greenhouse effect that keeps the Earth warmer than it would otherwise be.”
- A second finding to the effect that as concentrations of greenhouse gases increase, global temperatures will also increase along with them.

He notes that the switch from calling the issue “the greenhouse effect” to calling it “global warming” or “climate change” is subtle but significant: it moves the debate from a discussion of the causes of change to its predicted implications, and that predictably produces a misinformed debate.

He further notes that temperature data (by itself) is predictably noisy.

“A warming trend might validate the greenhouse hypothesis or it might be caused by cyclical factors. A cessation in warming could undermine the theory or it might represent a case where the noise in the data had obscured the signal.”

You can see the problem straight away. Today in the media there are a number of self-interested groups arguing about whether a particular temperature change is moving up or down. That is actually a red herring of sorts, since it does not take into account the real issue of greenhouse warming.

To summarise the problem: there would be almost no scientists who dispute that the greenhouse effect is the cause of global warming. There are, however, many commentators who get very excited about every movement in temperature, as if these fluctuations discount the central problem.

In my view (this is not in the book), a good analogy is that the planet is like a giant slow-motion car crash. We all know it is happening, but some of us are arguing about whether it is better to crash a red car than a blue one. Temperature changes are an expected outcome, but we need to take into account a wider range of factors than temperature change alone, and that relationship is not necessarily simple.

Disappointingly, much of the debate is about the effects of global warming, even though almost no one disagrees about the cause.

Nate went to the Copenhagen summit in 2009 and was able to talk with some of the delegates there. He also noted that in 2011 alone the fossil fuel industry spent more than $300m on lobbying activities.

So to the computer modelling and the data – what did Nate find?

What matters most, as always, is how well the predictions do in the real world. He notes three types of uncertainty in building a climate forecast model: initial condition uncertainty, scenario uncertainty and structural uncertainty.

All of this means that over any snapshot shorter than about 25 years, the noise may outweigh the overall signal. The problem is that if the IPCC makes forecasts on shorter time scales (as it did), those forecasts will be wrong, because these structural uncertainties cannot be eliminated.
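To see why a short snapshot can be dominated by noise, here is a minimal Python sketch (my own illustration, not a calculation from the book). It assumes a hypothetical steady warming trend buried in independent year-to-year noise, and compares that trend with the standard error of a least-squares trend fitted over windows of different lengths. The trend and noise values are made up for illustration.

```python
import math

def trend_to_noise_ratio(n_years: int, trend_per_year: float, noise_sd: float) -> float:
    """Ratio of a true linear trend to the standard error of its least-squares
    estimate, assuming independent (white) noise with standard deviation noise_sd."""
    if n_years < 2:
        raise ValueError("need at least two years to fit a trend")
    # Sum of squared deviations of t = 0..n-1 from their mean is n(n^2 - 1)/12.
    sxx = n_years * (n_years ** 2 - 1) / 12
    slope_se = noise_sd / math.sqrt(sxx)
    return trend_per_year / slope_se

# Hypothetical values, not taken from the book: a steady warming of
# 0.02 degrees per year buried in year-to-year noise with sd 0.1 degrees.
TREND = 0.02
NOISE_SD = 0.1

for n in (5, 10, 15, 20, 25, 30):
    ratio = trend_to_noise_ratio(n, TREND, NOISE_SD)
    print(f"{n:2d} years: trend / standard error = {ratio:.1f}")
```

The ratio grows roughly as the window length to the power 3/2, so doubling the window nearly triples how clearly the trend stands out. Real temperature noise is autocorrelated (El Niño years cluster, for example), which makes short windows noisier than this white-noise sketch suggests.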

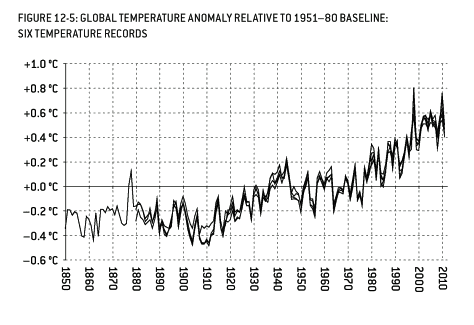

Silver goes on to show various graphs of temperature changes and SO2 (sulfur dioxide emissions) since 1850 and 1900 respectively.

Global warming does not progress at a steady pace. Silver makes the point that temperatures are going to fluctuate up and down, but with an overall long-term upward trend. I encourage you to read the book, as there are more graphs and calculations in there that I can’t easily reproduce here (an indicative graph is shown above).

For example (page 407):

“Under Bayes theorem a no-net-warming decade would cause you to revise downward your estimate of the global warming hypothesis’s likelihood to 85% from 95%.”
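The arithmetic behind a 95% to 85% revision can be sketched with Bayes’ theorem in a few lines of Python. The two likelihoods below are hypothetical values picked so the result lands near 85%; the book’s own inputs may differ.

```python
def bayes_update(prior: float, p_data_if_true: float, p_data_if_false: float) -> float:
    """Posterior probability of a hypothesis after observing some data (Bayes' theorem)."""
    numerator = prior * p_data_if_true
    evidence = numerator + (1 - prior) * p_data_if_false
    return numerator / evidence

# Illustrative likelihoods only (assumed, not quoted from the book):
# a decade with no net warming happens 15% of the time if the
# greenhouse-warming hypothesis is true, and 50% of the time if it is false.
prior = 0.95
posterior = bayes_update(prior, p_data_if_true=0.15, p_data_if_false=0.50)
print(f"{prior:.0%} -> {posterior:.0%}")  # 95% -> 85%
```

A flat decade lowers confidence because it is more likely under "no warming" than under "warming", but a high prior means one decade cannot overturn the hypothesis on its own.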

The extreme mix of politics and science over causality in these forecasts means that forecasts are going to continue to be wrong, but that does not negate the overall thesis: greenhouse warming is causing long-term damage to all of our life systems.

“In science, one rarely sees all the data point to one precise conclusion. Real data is noisy – even if the theory is perfect, the strength of the signal will vary. And under Bayes’s theorem, no theory is perfect. Rather, it is a work in progress, always subject to further refinement and testing. This is what scientific skepticism is all about.”

At the end of the chapter on climate change he makes the point that much of the argument comes down to the black-and-white nature of politics. Politics is very much about beliefs and ideology, and it just cannot cope with the intrinsic uncertainty in the forecast models.

In short, if we make the argument about temperature changes alone, we risk discounting the real complexity of global warming and unnecessarily confusing that with the very real consequences (of which temperature change is one). The Signal and the Noise: The Art and Science of Prediction is about much more than just this one chapter, but it did give me a useful perspective on the underlying complexity of predictions.

At the very real risk of oversimplifying a chapter of 50+ pages: predicting outcomes over the short term is quite uncertain, and if you use Bayes’ theorem you just keep re-running the numbers as new data arrives to account for that uncertainty. The real problem is that politicians and the public discount global warming because of what they see as failed predictions on the temperature line.
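Re-running the numbers can be sketched as repeated Bayesian updating, where each decade’s posterior becomes the next decade’s prior. The likelihoods in this Python sketch are hypothetical, and it treats each decade as independent evidence, which real temperature data is not.

```python
def bayes_update(prior, p_data_if_true, p_data_if_false):
    """One application of Bayes' theorem."""
    numerator = prior * p_data_if_true
    return numerator / (numerator + (1 - prior) * p_data_if_false)

# Hypothetical likelihoods of what a decade of surface data shows,
# given (warming hypothesis true, warming hypothesis false).
LIKELIHOODS = {
    "flat": (0.15, 0.50),     # no net warming over the decade
    "warming": (0.85, 0.50),  # clear net warming over the decade
}

def rerun(prior, decades):
    """Re-apply Bayes' theorem once per decade, feeding each posterior back in
    as the next prior. Returns the probability after each step."""
    history = [prior]
    for outcome in decades:
        p_true, p_false = LIKELIHOODS[outcome]
        prior = bayes_update(prior, p_true, p_false)
        history.append(prior)
    return history

for p in rerun(0.95, ["flat", "warming", "warming", "flat"]):
    print(f"{p:.1%}")
```

The estimate moves down after a flat decade and back up after warming decades; no single decade settles the question, which is the point of continually re-running the numbers.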

There’s a good post by Ed Hawkins here that explains the difference between short-term variability (weather) and long-term trends (climate). It shows some model predictions too. http://www.climate-lab-book.ac.uk/2013/what-will-the-simulations-do-next/

Like your red or blue cars, Gareth, I’ve been explaining to sceptics that they’re on the sea shore arguing about whether the tide has stopped based on the sequence of waves coming up the beach. It’s pointless trying to decide by looking at the last five, ten or even sixteen waves. But watch long enough and the speed the tide is coming in will become very clear. In other words, the noise is a distraction to be ignored.

I’m bemused by the tide analogies that keep appearing now. At 12 years old I was up in some high coastal hills north of Gisborne when someone unseen fired both barrels of a shotgun at me. As the shooter was between me and home, I had to detour to a place where I could reach the sea about 8 km from home, then make my way back under the cliffs on a rising tide. With about 1.5 km to go to open beach, the biggest waves were just reaching the cliffs, so at each small point I spent time perched on the rocks counting waves and observing the patterns to identify the moment I could dash at full speed to the next point. That gave me a feel for rising-tide-with-waves analogies. 🙂

Thanks John,

It’s an imperfect analogy, but one of the key points is that the public doesn’t understand uncertainty at all. This is a long-term issue, but we need to take short-term steps to lessen the consequences. Weather is a complex system, and in another chapter of the book Silver talks about how well we are doing there. The book also covers baseball, elections, earthquakes, poker and other topics from a probability perspective.

(BTW, it was me, not Gareth, who wrote this review.)

Yes; apologies, Jason. Like a climate ‘sceptic’, I jumped to a hasty conclusion. Though unlike a climate sceptic I admit my error. 🙂

Yes, I know that the tide analogy is not perfect but then how can any analogy be perfect?

By the way, did you see Michael Mann’s article on Nate Silver’s latest work? It’s worth reading. Nate Silver is to be read with caution on climate: http://www.huffingtonpost.com/michael-e-mann/nate-silver-climate-change_b_1909482.html

Jason – I’ve always appreciated Tamino at Open Mind for sorting out the numbers with such clarity. Maybe they are mathematical relatives?

Thanks Noel, I don’t know if there is a connection. One of Silver’s key approaches is to apply Bayes’ theorem to the data. Anyone with some math knowledge can research that as a way of recasting probability. It seems like a very smart approach to me.

Tamino is well versed in where Bayesian analysis is appropriate: http://tamino.wordpress.com/2010/03/24/bad-bayes-gone-bad/

Tamino is an acknowledged expert, with climate papers under his belt: Foster and Rahmstorf (2011). [Tamino is Grant Foster] If you like statistics his blog is recommended.

Jason, I don’t think Bayes is a “recasting” of probability so much as another branch of the subject. The more commonly held view of statistics in the early 20th century is now known as “frequentist” statistics, which takes a purely objective view of data. There has been a “frequentist vs Bayesian” clash ever since Bayes formulated his theorem in the 1700s. I think even today there are “Bayesian zealots” who may use Bayes when it is not appropriate. Tamino even mentions these zealots in the link provided by John.

Bayes works well with sparse data and where there is a prior set of beliefs or knowledge to work with.

One area I am trying to get to grips with is the idea of subjective vs objective Bayesian thinking. “Subjective Bayesians” use some kind of personal or subjective belief as a prior probability.

To me, this sounds like non-objective science, but I am advised that there is a lot of subjective Bayesian reasoning in climate modelling. I’d be keen to hear from anyone who can explain this to me.
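Since subjective versus objective priors came up, here is a toy Python illustration using a Beta-Binomial model (a standard textbook conjugate setup, not the method in Frame’s paper or in any climate model). Two hypothetical analysts estimate the same unknown probability: one starts from a flat prior, the other from a strong prior belief centred on 0.8. The data and priors are invented for illustration.

```python
def beta_binomial_posterior_mean(a: float, b: float, successes: int, trials: int) -> float:
    """Posterior mean of an unknown probability with a Beta(a, b) prior
    after observing `successes` out of `trials` (conjugate update)."""
    return (a + successes) / (a + b + trials)

# Hypothetical priors for the same unknown probability.
PRIORS = {
    "flat Beta(1, 1)": (1, 1),          # 'objective'-style uniform prior
    "informative Beta(20, 5)": (20, 5), # strong subjective belief, mean 0.8
}

# The same observed proportion (40%), first sparse, then plentiful.
for successes, trials in [(2, 5), (200, 500)]:
    print(f"data {successes}/{trials}: frequentist estimate = {successes / trials:.2f}")
    for name, (a, b) in PRIORS.items():
        mean = beta_binomial_posterior_mean(a, b, successes, trials)
        print(f"  {name}: posterior mean = {mean:.2f}")
```

With 2 successes in 5 trials the two posteriors are far apart, so the prior drives the answer; with 200 in 500 they nearly agree with each other and with the frequentist estimate. That is why the choice of prior matters most when data are sparse.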

Dave Frame, at Victoria Uni, has done quite a lot of work in this area. Dave commented on this blog not too long ago.

His 2005 paper “Constraining climate forecasts: The role of prior assumptions” gets cited quite a bit.

http://www.climateprediction.net/science/pubs/2004GL022241.pdf

I would also recommend “The Theory That Would Not Die: How Bayes’ Rule Cracked the Enigma Code, Hunted Down Russian Submarines, and Emerged Triumphant from Two Centuries of Controversy” as a good read on Bayes, suitable for the general reader.

And I just bought it!

You’d want to read Michael Mann’s piece (FiveThirtyEight: The Number of Things Nate Silver Gets Wrong About Climate Change) about Mr. Silver’s climate chapter before suggesting that his book “should be required reading” for anyone.

Thanks David, I think it is a great book on dealing with data across quite a few topics. The chapter on climate change was unexpected, but I thought it was worth writing about. After a quick read of Mann’s response, it does seem that Silver has missed some of the complexities, but personally I was more interested in the math. In the context of the book it is one chapter and not the main topic at all, which is why I found it to be required reading. I note that Michael Mann is still a FON (fan of Nate), but not on this topic.

I found Michael Mann’s account of Nate Silver’s book profoundly depressing. At no point in the HuffPo article did he address any technical issues; he preferred to focus on ad hom attacks (that Silver might have attended lectures by free market advocates, etc.).

I haven’t read Silver’s book, but as someone who has recently found a new enthusiasm for the statistical fundamentals behind climate science and Bayesian stats in general, I might actually read it. Michael Mann’s strawman arguments don’t deter me from this, and really don’t endear me any more towards Mann, who seems to have some fairly serious issues.

“There would be almost no scientists who dispute that the greenhouse effect is the cause of global warming.”

I could agree with this sentence if the definite article was replaced by an indefinite article. The greenhouse effect is one of the causes of surface temperature change. At times it may be a determining factor, but at other times (like the last decade) it is swamped by others.

Because it moves doggedly in one direction, while other factors are presumed to be random, logic suggests it will generate a persistent upwards tendency. But the magnitude of that tendency is not only uncertain … it is just unknown. All depends on such mysteries as clouds and aerosols.

As Silver suggests, a decade of no-warming brings about a substantial reduction in theoretical or modelled certainty that the greenhouse effect will prevail over other factors. If the UK Met Office is right, there will be two decades of no-warming – which will probably more than double the reduction in certainty.

I’ve just bought the Kindle edition and look forward to wading through Chapter 12.

Sorry you’re wrong, Australis. You’re working on the basis that the rise in temperature is driven randomly and our observation of what is happening to surface temperatures is the only information we have to go on. It’s not.

The greenhouse effect is a scientific fact (I think you will agree with that), and it’s driven by the concentration of CO2 in the atmosphere. That CO2 is steadily rising: http://climate.nasa.gov/key_indicators

Consequently unless 1) the Earth’s orbit round the Sun changes unpredictably in the future; 2) the Sun’s output varies unpredictably, or 3) we manage to bring our GHG emissions under control*; then, as sure as eggs is eggs, our planet’s temperature has to go up. Short-term variability is just that; short-term. This means that—just like if you get a run of heads when you flip a coin—at some point soon that natural variability is going to cause the surface record to start climbing dramatically to a new and astonishing peak. This is what happened in 1998.

The CO2 concentration is building in the atmosphere, the heat is accumulating in the oceans—indeed it’s even accumulating in the Earth [ http://www.ncdc.noaa.gov/paleo/borehole/core.html ]—so don’t become too fixated on the surface temperature record.

*There are many who would say that the likelihood of any of those 3 situations arising is about the same.

Yes, I agree that the greenhouse effect is a scientific fact AND that CO2 is steadily rising. AND I agree that the planet’s temp has to go up unless one of the three mentioned things were to happen.

From there, how do we get to the axiom that “natural variability will [soon] cause the surface record to start climbing dramatically to a new and astonishing peak”? Where are the missing steps, John?

Natural variability is at the moment working against the underlying trend, masking its inexorable climb, but at some point soon it has to start going with the underlying trend and emphasising the climb.

Imagine the graph like a boat in the harbour bobbing up and down on the waves as the tide comes in. A dozen smaller waves in a row and it would look like, in relation to the harbour, the boat is not rising much on the tide; but then along come a few bigger waves and again the boat’s rise in relation to the baseline, the harbour wall, becomes very apparent.

You seem to be a bit confused, there, Australis. The greenhouse effect is simply a model of how the atmosphere works. It is changes in forcings that cause surface temperature change, and there are several forcings that can cause this, including GHGs, solar irradiation, aerosols and so on. All of these forcings are mediated by the greenhouse effect, regardless of whether we consider the forcing to be “natural” (e.g. changes in incoming TSI) or “man-made” (e.g. CO2 from burning fossil fuel).

These changes due to forcings are the signal, and will continue for as long as the forcings are causing a shift to a new equilibrium state. The noise comes from internal variability, which can only mask the signal, not alter it. Even if the noise is all one direction for a while, sooner or later the signal will assert itself. When that happens, a lot of people are going to look rather foolish.
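The point that internal variability “can only mask the signal, not alter it” can be illustrated with a small simulation. This is a minimal Python sketch under loudly hypothetical assumptions: a steady forced trend of 0.02 degrees per year, plus AR(1) noise (persistence 0.6, shocks with standard deviation 0.1) standing in for internal variability such as El Niño/La Niña. It counts how often a window of a given length shows no warming at all, even though the underlying trend never stops.

```python
import math
import random

def ols_slope(ys):
    """Least-squares slope of ys against t = 0, 1, 2, ..."""
    n = len(ys)
    t_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(ys))
    den = sum((t - t_mean) ** 2 for t in range(n))
    return num / den

def simulate(n_years, trend, phi, shock_sd, rng):
    """Steady forced trend (the 'signal') plus AR(1) internal variability (the 'noise')."""
    # Start the noise from its long-run (stationary) spread rather than from zero.
    noise = rng.gauss(0.0, shock_sd / math.sqrt(1 - phi ** 2))
    series = []
    for year in range(n_years):
        series.append(trend * year + noise)
        noise = phi * noise + rng.gauss(0.0, shock_sd)
    return series

def share_of_flat_windows(window, runs=300, n_years=100,
                          trend=0.02, phi=0.6, shock_sd=0.1, seed=1):
    """Fraction of all `window`-year stretches whose fitted trend is <= 0."""
    rng = random.Random(seed)
    flat = total = 0
    for _ in range(runs):
        series = simulate(n_years, trend, phi, shock_sd, rng)
        for start in range(n_years - window + 1):
            total += 1
            if ols_slope(series[start:start + window]) <= 0:
                flat += 1
    return flat / total

for window in (10, 20, 30):
    print(f"{window}-year windows with no warming: {share_of_flat_windows(window):.1%}")
```

With these made-up parameters, flat decades turn up fairly often while flat 30-year stretches are essentially absent; the exact percentages depend entirely on the assumed trend and noise.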

It would be appropriate for Mr. Silver, because his book is entitled “The Signal and the Noise”, to show some awareness of what the climate “signal” is if he’s going to discuss climate science and some of the predictions of climate scientists. As Kevin Trenberth, who was the lead author of the IPCC AR4 Scientific Assessment, points out, 20 years is the shortest period the IPCC AR4 took as a valid period of time over which the climate signal can be said to emerge from the noise when examining the global average surface temperature chart.

As Michael Mann pointed out, Silver “repeatedly falls victim to the fallacy that tracking year to year fluctuations in temperature (the noise) can tell us something about predictions of global warming trends”.

Silver highlights the views of Scott Armstrong by discussing a 5-year-old bet Armstrong attempted to make with Al Gore.

Scott Armstrong is a professor of marketing at the Wharton School. His critique of climate science (from his 2001 book Principles of Forecasting: A Handbook for Researchers) is for example: “we have been unable to find a single scientific forecast to support global warming”. He opined on sea level rise as well: “to date we are unaware of any forecasts of sea levels that adhere to proper (scientific) forecasting methodology”. It’s quite a critique. Another way to describe it: no one involved in climatology is a scientist, except Armstrong, a non-climatologist.

Silver makes it crystal clear what he thinks of this type of criticism, telling us in the chapter, entitled “A Climate of Healthy Skepticism”, in a paragraph that starts out with the sentence “this healthy skepticism is generally directed at the reliability of computer models”, that Armstrong “is such a skeptic”.

Now the fact is Armstrong actually wanted to bet Gore that Earth’s climate will never change again.

Given what paleoclimatologists have discovered about how radically Earth’s climate has changed in the past, i.e. from a “snowball Earth” almost completely covered with ice to an ice free planet with alligators living north of the Arctic Circle, one might ask what is a discussion of Armstrong’s assertions doing in a section supposedly discussing “healthy skepticism”?

Would Silver discuss as “healthy skepticism” of his political polling method the views of someone who was publicly accusing Silver of knowing nothing because Silver wouldn’t take his bet that no one would win the next Presidential race?

Yet Silver duly publishes his “Figure 12-2 Armstrong-Gore Bet” graphic showing the progress of the Armstrong bet over the 5 years it has existed. Armstrong, who Silver writes has admitted in public that “I actually try not to learn a lot about climate change” duly forecasts that climate will never change again. Gore, although he didn’t bother with the bet, has said climate scientists are almost unanimous that Earth’s climate is warming at an alarming rate. My god, Silver’s chart shows, after 5 entire years of data, it looks like Gore is losing. This matters? At no time does Silver give us a wink and tell us he’s kidding. Silver doesn’t point out that looking at 5 years of global average surface temperature data isn’t a long enough period over which to see what the “signal” is.

This is valuable discussion aimed at helping us understand what is “signal” and what is “noise”?

Hansen’s latest communication explains what is driving the “signal”. As Hansen writes, all the ballyhoo over what the global average surface temperature chart is showing us can only be explained at this point as “noise”. All analysis of the longer-term trend in this chart, i.e. over the time frame the IPCC AR4 selected as the shortest meaningful interval, shows continuing global warming. The planet has been measured to be out of energy balance, i.e. more energy is coming into the system than is going out, which is proved by the latest measurements of the global ocean. (Hansen cites Hansen et al., Earth’s Energy Imbalance, which depends on the von Schuckmann et al. analysis of the Argo data.)

In all his writing about the history of climate science prediction, it does not seem significant to Silver that as Trenberth has written, there has been a “revolutionary” improvement in our ability to measure what is going on in the planetary system in the last few years.

Hansen, from his January 2013 communication: “The continuing planetary energy imbalance and the rapid increase of CO2 emissions from fossil fuel use assure that global warming will continue on decadal time scales”.

Silver would have done better had he thought about what the “signal” is.

When people discuss “global warming”, what they mostly focus on is the global average surface temperature chart. It is a significant piece of data, but it is worth remembering where 90% of the heat accumulating in the system due to the measured energy imbalance is going: into the ocean. One of the most significant fluctuations in the global average surface temperature chart comes from changes in ocean currents driving changes in the surface temperature of the Pacific Ocean, i.e. El Niño/La Niña. The global ocean, on the other hand, just steadily heats up. The global average surface temperature chart, which is very noisy on the time scale Silver focussed on, is a tiny tail on a big dog: the steadily warming global ocean. That’s the signal. That’s why Hansen can say “global warming will continue”, as opposed to “maybe it will continue”. More “noise”, unfortunately, comes from chapters like Nate Silver’s “A Climate of Healthy Skepticism”.
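To put the “tiny tail on a big dog” in numbers, here is a back-of-envelope Python sketch. The energy imbalance of 0.5 W/m² is a hypothetical round figure rather than Hansen’s published estimate, and the masses and specific heats are approximate textbook values; the point is only the relative size of the two reservoirs.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600
EARTH_SURFACE_M2 = 5.1e14  # approximate surface area of the Earth

# Approximate heat capacities (mass x specific heat), round textbook values.
OCEAN_HEAT_CAPACITY = 1.4e21 * 4000       # J/K: ocean mass (kg) x seawater specific heat (J/kg/K)
ATMOSPHERE_HEAT_CAPACITY = 5.1e18 * 1005  # J/K: atmosphere mass (kg) x air specific heat (J/kg/K)

def yearly_warming(imbalance_w_m2, heat_capacity_j_per_k, share=1.0):
    """Average temperature rise per year (K) if `share` of the energy
    imbalance were spread evenly through a single reservoir."""
    joules_per_year = imbalance_w_m2 * EARTH_SURFACE_M2 * SECONDS_PER_YEAR
    return share * joules_per_year / heat_capacity_j_per_k

IMBALANCE = 0.5  # W/m^2, hypothetical round figure for illustration

print(f"whole ocean, taking 90% of the heat: "
      f"{yearly_warming(IMBALANCE, OCEAN_HEAT_CAPACITY, 0.9):.4f} K/yr")
print(f"atmosphere, if it took all the heat: "
      f"{yearly_warming(IMBALANCE, ATMOSPHERE_HEAT_CAPACITY):.2f} K/yr")
```

This is a scale comparison, not a forecast: spread through the whole ocean, the energy barely moves the average temperature each year, whereas the same energy confined to the atmosphere would be dramatic. In reality heat does not mix evenly through either reservoir.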

I think you are reading too much into a chapter title, but I agree that “Silver would have done better had he thought about what the ‘signal’ is”; that is what I was looking for. However, when I read Mann’s review I felt like I was reading about a different book. I didn’t feel the Armstrong material was so prominent at all. What I was most interested in was the process and the math. As various readers have pointed out, the analysis is not as good as it could have been regarding what is signal and what is noise.

What all of this means to me is that when an uber nerd like Silver apparently fails to separate noise from signal, the overall communication of global warming to the general public is not clear. In very simple terms, though, what I got was the idea that fluctuations in the short term (meaning 20 or so years) were not that significant to the overall trend.

Now that I’ve seen the graph over at http://hot-topic.co.nz/still-warming-after-all-these-years-again/ I can see what is causing some of the fluctuations.

There’s some ‘talking at cross-purposes’ going on in some of the comments above. Just for the record, apparently it is a different book, Jason. You’re writing about ‘The Signal and the Noise: The Art and Science of Prediction’. Mann is writing about Nate Silver’s new book, ‘The Signal and the Noise: Why So Many Predictions Fail-but Some Don’t’. http://thinkprogress.org/climate/2012/10/08/970541/nate-silvers-climate-chapter-and-what-we-can-learn-from-it/ < This is the review by Dana Nuccitelli of SkS: worth reading. The titles are very similar but if you look at the illustration of the book cover they're different.

Thanks John, I read the kindle version so never saw a cover but there is only one book. I think it is just different backgrounds in play. I’m more familiar with economics where the modelling is more random. If I read some of the comments correctly climate modelling may not be the best place for a Bayesian approach.

Thanks John for the Dana Nuccitelli review. That review is more generous in my opinion.